Can AI support thematic analysis, or does using it mean giving up interpretive authority over your data?

This question comes up constantly among qualitative researchers, and it deserves a serious answer. A recent peer-reviewed study by Ayik et al. (2026), published in Qualitative Inquiry, takes a careful empirical look at exactly this: comparing four AI-based tools for thematic analysis against a rigorously conducted human reference analysis following Braun and Clarke’s six-phase thematic analysis (TA) framework.

The findings are nuanced, practically useful, and worth unpacking for anyone considering how to thoughtfully incorporate AI into their qualitative workflow. We want to be upfront: one of the tools evaluated is MAXQDA AI Assist. We’ll share what the study found, including where other tools showed strengths and where all tools fell short, because the goal here is to give you information that helps you make good methodological decisions, not to oversell a product.

The Study: Four AI Tools, One Human Baseline

The researchers worked with a validated dataset from a qualitative study on formative assessment practices in K-12 STEM classrooms with multilingual learners – specifically, qualitative data collected from 25 in-service teachers as part of a U.S. Department of Education–funded project. Crucially, the human reference analysis had been completed before this study began, as part of separate ongoing research by the same lead author. This gave the team a well-considered interpretive baseline to compare against: not a performance benchmark or definitive truth, but a carefully constructed prior analysis.

Four AI tools then analyzed the same data independently, with no access to the human-developed themes:

- ChatGPT-4o

- QInsights

- ATLAS.ti AI

- MAXQDA AI Assist

The team systematically examined where AI outputs converged with the human analysis, where they diverged, and, importantly, how each tool’s analytic logic shaped what it produced.

What the Study Found

The headline finding is clear: all four tools identified relevant thematic patterns and showed at least partial convergence with the human analysis. They also delivered substantial time savings – the human analysis required over 150 hours, while AI outputs were generated in a fraction of that time.

But none of the tools fully matched the interpretive depth, reflexivity, or conceptual integration of the human reference analysis.

One finding stands out in the numbers. Using a conceptual match criterion against six human-coded themes, MAXQDA AI Assist produced three exact matches (50% of human themes), while ChatGPT-4o, QInsights, and ATLAS.ti AI each produced one exact match (16.7%). All tools produced partial alignments for the remaining themes. No tool produced hallucinated content, likely due to prompting that explicitly instructed each tool to rely solely on the provided dataset.
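For readers who want to check the percentages, they follow directly from the exact-match counts reported above. Here is a minimal sketch of that arithmetic in Python; the variable names are our own and purely illustrative, and only the counts come from the study.

```python
# Exact-match counts as reported in the study, out of six human-coded themes.
HUMAN_THEMES = 6
exact_matches = {
    "MAXQDA AI Assist": 3,
    "ChatGPT-4o": 1,
    "QInsights": 1,
    "ATLAS.ti AI": 1,
}

# Share of human themes each tool matched exactly, as a percentage
# rounded to one decimal place.
match_rates = {
    tool: round(100 * count / HUMAN_THEMES, 1)
    for tool, count in exact_matches.items()
}

for tool, rate in match_rates.items():
    print(f"{tool}: {rate}%")
```

Note that this covers exact matches only. The partial alignments the study reports for the remaining themes are a qualitative judgment and are not captured by a simple ratio like this.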

Importantly, the study doesn’t frame AI as a failed approximation of human analysis. Reflexive thematic analysis is a fundamentally constructive practice – themes aren’t discovered in data, they’re actively built through a researcher’s interpretive engagement with it. AI can support that process. It cannot replicate it.

How the Four Tools Differ – Including Their Epistemological Orientations

One of the study’s more thought-provoking contributions is its attention to the epistemological tendencies of different tools: not just their outputs, but the underlying logic by which they construct meaning. This matters because your choice of AI tool isn’t just a technical decision: it’s a methodological one.

ChatGPT-4o functions as a general-purpose language model operated through prompting. It showed high convergence with the human analysis overall, but the researchers noted that it appeared to rely on word frequency when identifying codes, for example by treating a single teacher’s repeated references to a project as separate codes from multiple participants. This pattern-matching behavior reflects what the authors describe as a post-positivist orientation, foregrounding surface regularities rather than interpretive meaning-making.

QInsights takes a dialogic, corpus-based approach. Rather than generating codes, it surfaces themes through conversational querying of your dataset – essentially “QDA without coding,” as it has been described in recent scholarship. The authors found it aligned more closely with an interpretivist stance when not constrained to code production, though its thematic map did not correspond well to the themes it generated, and sub-themes were limited. It may be better suited to exploratory conversational analysis than to end-to-end thematic analysis.

ATLAS.ti AI integrates into an established CAQDAS environment and initially generated 1,001 codes from the dataset, a volume that aligned more closely with human-generated codes than the output of its conversational AI feature, which tended to repeat sub-theme wording as codes. It was the only tool that did not capture the multimodality theme, which appeared clearly in all other analyses. Its reliance on frequency-based extraction leads the authors to characterize it as reflecting post-positivist tendencies similar to ChatGPT. Its thematic map was also the weakest visually, listing research questions without representing the themes or their relationships.

MAXQDA AI Assist emphasizes what the researchers call an “assist-and-review” approach: the AI offers suggestions – summaries, codes, thematic structure – which the researcher then accepts, revises, or rejects. The authors observed no frequency-based or quantitative reasoning in its generated codes, distinguishing it from ChatGPT and ATLAS.ti. Together with QInsights, MAXQDA AI Assist is described as leaning toward interpretivist engagement, foregrounding researcher interaction rather than automated pattern extraction. Its thematic map was the most complex of the four and included explanatory text that clarified relationships between themes and research questions.

The authors’ conclusion here is worth sitting with: the epistemological stance of the researcher and the chosen AI tool should ideally be congruent. Researchers working within an interpretivist paradigm, including those conducting reflexive thematic analysis, may find tools like MAXQDA AI Assist and QInsights more compatible with their methodology than frequency-driven approaches.

A Note on Scope

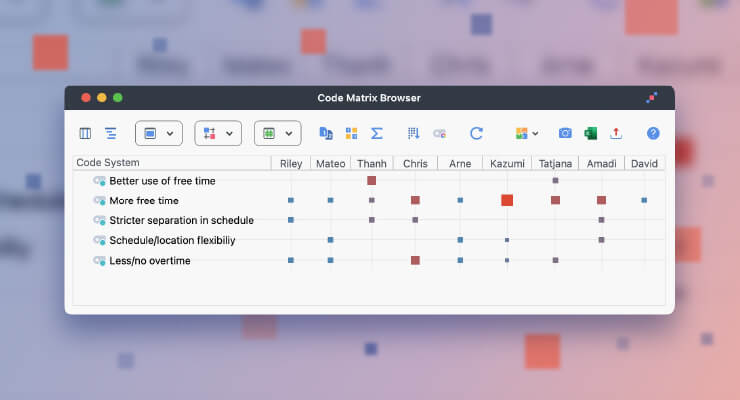

It’s important to read these epistemological characterizations carefully. The study discusses the analytic orientation of specific AI features. It does not evaluate the overall methodological scope of the software systems being compared. Independent of AI Assist, MAXQDA provides comprehensive support for mixed-methods research designs, including integrated quantitative variables, statistical modules, the Code Matrix Browser, and mixed-methods visualizations. The interpretivist characterization in the study refers exclusively to AI-assisted thematic analysis – not to MAXQDA as a platform.

What “Assist-and-Review” Looks Like in Practice

For researchers less familiar with MAXQDA AI Assist, it’s worth making this concrete with an illustrative example. Imagine you’ve coded 20 interviews from a study on workplace wellbeing. You select a main code like “Coping Strategies” and use AI Assist to suggest subcodes based on the coded segments. It proposes a structured set – say, “Social Support,” “Boundary-Setting,” and “Avoidance Behaviors” – each linked to specific passages in your data.

You review the suggestions in context. Some you accept. Others you rename or merge based on your theoretical framework. One you reject because it conflates two conceptually distinct phenomena that your reading of the data makes clear. Throughout, the suggestions remain traceable to the original segments, and your interpretive decisions remain yours.

This is the key distinction the study draws: AI that structures and accelerates versus AI that substitutes. The former is compatible with reflexive thematic analysis; the latter is not.

An Important Debate in the Background

The study situates itself within a genuine and unresolved methodological debate. Virginia Braun herself has publicly expressed concern about AI in reflexive thematic analysis, arguing that many pro-AI discourses rest on post-positivist assumptions and that technological efficiency doesn’t automatically mean methodological improvement (Braun, 2025). A collective position statement signed by a large group of international qualitative researchers has gone further, calling for the categorical exclusion of generative AI from reflexive qualitative approaches (Jowsey et al., 2025).

Ayik et al. engage these concerns seriously. Their position is not that AI should be used in reflexive thematic analysis, but that researchers who are considering whether to use it deserve empirical guidance about what different tools actually do and what that implies for their epistemological commitments. Whether AI belongs in your workflow at all is a question each researcher must answer in relation to their own methodological stance.

Practical Implications for Your Research

Based on the study’s findings, a few practice-oriented takeaways emerge for researchers considering AI in their qualitative work:

The choice of AI tool is also an epistemological choice. Frequency-based tools may suit mixed-methods researchers or those working with large datasets where descriptive pattern-finding is a goal. Interpretivist researchers conducting reflexive thematic analysis should look for tools that foreground researcher interaction and keep decisions traceable and revisable.

Time savings are real but uneven. ChatGPT and QInsights were fastest to generate outputs. MAXQDA AI Assist required more setup but produced the closest alignment with the human-coded analysis. The right trade-off depends on your priorities.

Prompting matters more than it might seem. The study found no hallucinated content, which the authors attribute at least partly to explicit prompting that restricted the tools to the provided data. How you instruct an AI tool shapes what it produces, and that responsibility rests with you.

A transparent, documented workflow is non-negotiable. Whatever tool you use, clearly recording where and how AI contributed and where human judgment intervened is essential for methodological integrity and research transparency.
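What such documentation looks like will depend on your project, but even a simple decision log can make the split between AI contribution and human judgment visible. The following is a minimal sketch in Python, not a MAXQDA feature and not part of the study: the `SuggestionRecord` structure, its field names, and the `log_to_csv` helper are all hypothetical. The entries reuse the illustrative subcodes from the workplace wellbeing example above, and the rationales are invented for demonstration.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


# Hypothetical record of one AI suggestion and the researcher's decision.
@dataclass
class SuggestionRecord:
    tool: str         # which AI feature produced the suggestion
    suggestion: str   # the code or theme as the AI proposed it
    decision: str     # "accepted", "revised", or "rejected"
    final_label: str  # label used in the analysis ("" if rejected)
    rationale: str    # the researcher's reason for the decision


ALLOWED_DECISIONS = {"accepted", "revised", "rejected"}


def log_to_csv(records):
    """Return the decision log as CSV text, rejecting unknown decision values."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=[f.name for f in fields(SuggestionRecord)]
    )
    writer.writeheader()
    for record in records:
        if record.decision not in ALLOWED_DECISIONS:
            raise ValueError(f"Unknown decision: {record.decision!r}")
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Illustrative entries only; the rationales are made up for this sketch.
audit_log = [
    SuggestionRecord("AI tool", "Social Support", "accepted",
                     "Social Support", "Matches the coded segments"),
    SuggestionRecord("AI tool", "Boundary-Setting", "revised",
                     "Setting Work Boundaries", "Renamed to fit the framework"),
    SuggestionRecord("AI tool", "Avoidance Behaviors", "rejected",
                     "", "Conflates two distinct phenomena"),
]

csv_text = log_to_csv(audit_log)
print(csv_text)
```

A plain CSV file is used here because it can be shared alongside a methods appendix. A memo inside your QDA software or a spreadsheet would serve the same purpose, as long as each AI suggestion and the decision taken on it remain traceable.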

The Shift Worth Making

For qualitative researchers weighing whether to incorporate AI into their thematic analysis, Ayik et al. offer a reframe. The question isn’t:

“Will I lose interpretive control if I use AI?”

It’s:

“If I use AI, how do I design my workflow so that it genuinely supports – rather than substitutes for – my interpretive work?”

That shift opens up a more productive and honest conversation: one about transparency, epistemological fit, and the conditions under which AI tools strengthen rather than shortcut the work that makes qualitative research meaningful.

Interested in exploring AI Assist in your own MAXQDA projects? Learn more about MAXQDA AI Assist or try it out with a free trial.

Reference

Ayik, B., Gu, D., Zan, Y., Kim, S., & Kim, W. L. (2026). Human vs. AI: Evaluating thematic analysis with ChatGPT, QInsights, ATLAS.ti AI, and MAXQDA AI Assist. Qualitative Inquiry. https://doi.org/10.1177/10778004251412874